The Weekly Insight Podcast – From Luddites to Language Models: Why the World Isn’t Ending

Fear. It’s back. Maybe it never left. But here we are again in an existential crisis for investors. Is Artificial Intelligence (AI) the greatest thing that’s ever happened to the world? Will AI be the end of the world? What companies will survive? What companies will perish?

These questions are being asked on every TV show, podcast, news article, and website that talks about the market. And the panic is – yet again – palpable. So, let’s dive right into it.

Don’t Be a Luddite: Humanity Hates Technology (At First)

What we see as technology today is light years beyond what early humans experienced. But still, the human response to new advancements has almost always been the same: fear.

Imagine the reaction of a caveman watching his buddy first strike a flint and create fire. He likely viewed his buddy as a heretic. That pattern has repeated itself time and again in human history.

We can go back as far as Socrates. He believed that the written word would “implant forgetfulness in the souls” and warned that knowledge should only be transferred through face-to-face dialogue. The irony? The world only knows Socrates said this because Plato wrote it down.

Or there was the medical establishment in Britain when steam locomotives arrived. They warned that no human body could safely withstand speeds above 20 miles per hour. Even better, they feared that speeds above 50 miles per hour would cause passengers to “disintegrate.” My, how they would love airplanes.

But the most famous example is the Luddites. You’ve probably heard the word “Luddite” as a swipe at someone who is a technophobe. It comes from conflicts centered on the English textile industry in the early 1800s.

While fully understanding the story requires detailed exposition on the politics and wars of the time (which we won’t force you to suffer through!), understand this: the Luddites were rebelling against new technological innovations that completely changed the textile industry. New power looms increased worker output by 40x. Mechanized cotton spinning increased output by 500x. This was a complete transformation of the industry.

And the workers rebelled. They were convinced that the new machines would take their jobs and leave them penniless. So, they carried out raids on many factories, smashing the new machines. The government responded, and many Luddites were arrested, imprisoned, and even executed.

The problem was that the Luddites didn’t understand basic economics. Yes – productivity skyrocketed. But that didn’t mean fewer workers were needed. It meant that prices for textiles plummeted. Now, what could once be afforded only by the very wealthy could be purchased by the masses. Demand for textiles exploded. Jobs weren’t destroyed – they were created.
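For readers who like to see the arithmetic, here is a minimal sketch of that logic in Python. It is a toy model, not a reconstruction of the 1800s textile market: the constant-elasticity demand curve, the fixed wage, the assumption that price tracks labor cost per unit, and every name and number in it (`workers_needed`, `employment_ratio`, `demand_scale`, the elasticity values) are our own illustrative assumptions. Only the 40x productivity figure comes from the story above.

```python
def workers_needed(productivity, elasticity, wage=1.0, demand_scale=1000.0):
    """Workers required to meet demand in a toy textile market.

    Assumptions (hypothetical, for illustration only):
      - price equals labor cost per unit: wage / productivity
      - demand follows a constant-elasticity curve:
        quantity = demand_scale * price ** (-elasticity)
    """
    price = wage / productivity
    quantity = demand_scale * price ** (-elasticity)
    return quantity / productivity


def employment_ratio(productivity_gain, elasticity):
    """Employment after the productivity gain, relative to before it."""
    before = workers_needed(1.0, elasticity)
    after = workers_needed(productivity_gain, elasticity)
    return after / before


if __name__ == "__main__":
    # 40x is the power-loom figure cited above; the elasticities are made up.
    for elasticity in (0.5, 1.0, 1.5):
        ratio = employment_ratio(40.0, elasticity)
        print(f"elasticity {elasticity}: employment changes by {ratio:.2f}x")
```

Under these assumptions, a 40x productivity gain raises employment roughly 6.3x when demand is very responsive to price (an elasticity of 1.5) and shrinks it when demand barely responds (an elasticity of 0.5). The argument above is that textiles fell into the first camp: cheaper cloth drew in so many new buyers that more hands were needed, not fewer.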

To be fair to the Luddites, they weren’t entirely incorrect. Their specific jobs – as skilled weavers, for example – were no longer needed. But employment across the broader textile industry grew massively.

Which brings us to the impact on the market today. The violence isn’t playing out physically. But it is happening in stock prices. And while it may be accurate in very specific circumstances, the market – much like the Luddites – is missing the bigger picture.

Two Areas of Pain: AI Infrastructure & Downstream AI Disruption

There are many different arguments for the problems that AI could cause. The one that gets the most ink is the existential risk that AI will cause the extinction of humanity.

The people who are talking (and have talked) about it are not wild-eyed conspiracy theorists. Stephen Hawking once said, “The development of full artificial intelligence could spell the end of the human race.” Geoffrey Hinton, a Nobel Prize winner in physics and the man who built the foundation of modern AI, quit his job at Google in 2023 specifically so he could speak freely about AI risks. He now estimates a 10 – 20% probability of human extinction caused by AI in the next 30 years.

And it’s not just the outsiders. The CEOs of OpenAI (maker of ChatGPT) and Anthropic (maker of Claude) both signed the 2023 Center for AI Safety statement that read “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

But here’s the thing: none of that matters. At least for your portfolio. Can we (or you) control it? Will your portfolio value matter if AI-controlled Terminators are wiping us all out? Nope. So – at least at Insight – we’ve set that risk aside.

The other risks that are being cited by market analysts, however, are interesting. And, at least in how they’re playing out today, wildly illogical.

The first impact – which we’ve discussed in depth before – is what’s happening to the Big Hyperscalers. Their switch from largely cash-funded investment to debt financing for their $600 billion (in this year alone) of AI infrastructure spending has had a significant impact on their stock prices. It all started with Meta’s Q3 earnings report, and the pain has been widespread. The market is incredibly concerned that the investment won’t be justified by future profits. Only Google has been spared.

Past performance is not indicative of future results.

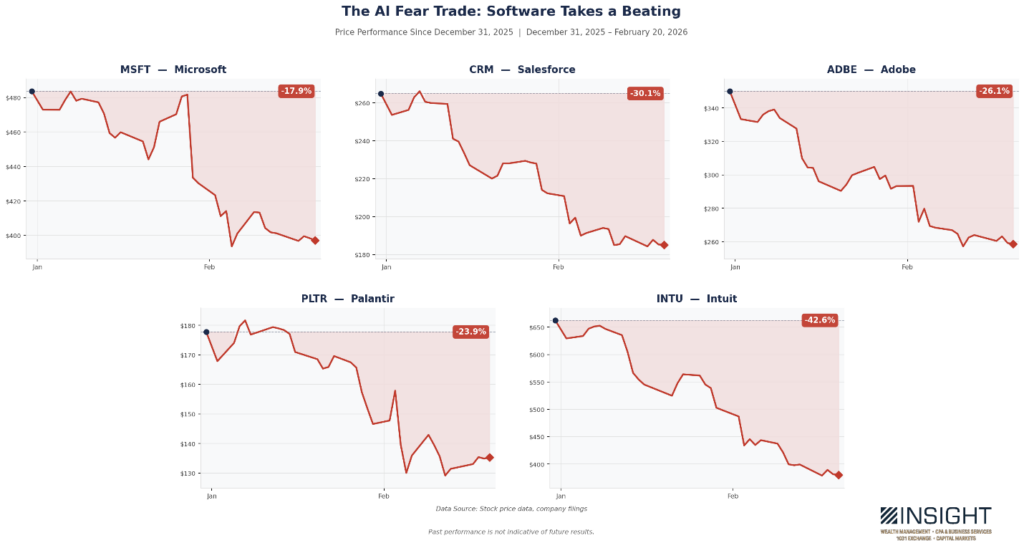

The other risk being cited is in the software industry. This pain started later and has been most prominent year-to-date. Essentially the market is asking “why would these companies have any value if AI can just whip up a new version on command?”

It’s a fair question. The answer will vary by company. There will be shrapnel, but the broad-brush condemnation of the entire sector misses the point entirely.

The pain, though, is palpable. Significant and longstanding companies have seen their prices drop by 15 – 40% year-to-date.

The good news is that neither of these trends is having a significant impact on Insight portfolios. But, more importantly, the idea that both problems can be happening simultaneously in the marketplace? It is completely illogical!

This was first pointed out earlier this month by HSBC’s head of U.S. technology research, Stephen Bersey. As he explained it, there are really two options here:

- AI Succeeds: If this happens, the massive investments by the Hyperscalers will be prescient and incredibly valuable. Those companies will succeed.

- AI Infrastructure is a bust: AI isn’t “inevitable.” It could be that this is all a massive waste of money (the bear case for the Hyperscalers). But if that’s true, the software companies will not be laid to waste.

Bersey goes a bit further on this. In his estimation, if AI succeeds, the demand for enterprise software systems will explode. You will need more software infrastructure to deploy, manage, and monetize AI at scale. The software companies will become the picks and shovels of AI monetization.

Whether he’s right on the last point remains to be seen. But the first two are clear: investors are betting negatively on both sides of the trade. They’re clearly going to be wrong on one of them.
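The logic can be laid out as a simple truth table. The Python sketch below is purely illustrative: the scenario labels, the bet names, and the `bear_bet_pays` table are our own shorthand for the two options described above, not anything published by HSBC. `None` marks the one outcome that remains an open question (whether software suffers if AI succeeds).

```python
# Does each bearish bet pay off under each scenario?
# True = pays off, False = loses, None = open question.
# All labels here are hypothetical shorthand for the argument above.
bear_bet_pays = {
    "AI succeeds": {
        "bearish_on_hyperscalers": False,  # the spending proves prescient
        "bearish_on_software": None,       # "remains to be seen"
    },
    "AI is a bust": {
        "bearish_on_hyperscalers": True,   # the spending was wasted
        "bearish_on_software": False,      # nothing displaces the software
    },
}


def losing_bets(scenario):
    """Return the bearish bets that clearly lose in the given scenario."""
    outcomes = bear_bet_pays[scenario]
    return [bet for bet, pays in outcomes.items() if pays is False]


def both_bets_can_win():
    """True only if some scenario lets every bearish bet pay off."""
    return any(
        all(pays is True for pays in outcomes.values())
        for outcomes in bear_bet_pays.values()
    )


if __name__ == "__main__":
    for scenario in bear_bet_pays:
        print(f"{scenario}: losing bets -> {losing_bets(scenario)}")
    print("Both bearish bets can win together:", both_bets_can_win())
```

Whichever row you pick, at least one bearish bet loses, which is the point: the market cannot be right about both at once.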

So, What’s Next?

What’s next? For now, it will be more volatility as the market digests exactly what AI will mean for the economy. We are just now in the beginning spasms of what could be a world-shifting change. There is much we don’t know about how it ends. But that doesn’t mean we can’t make money and work to avoid the disruption.

The Luddites weren’t stupid. They were skilled craftsmen watching their world change faster than they could adapt. Their mistake wasn’t in recognizing the disruption – it was in assuming the disruption was the whole story. The mills didn’t just destroy jobs. They created an industrial economy that lifted living standards for generations.

We’re in a similar moment today. It feels unprecedented – just like it did for the Luddites. And in those moments, the instinct is binary: embrace the future completely or reject it entirely. History shows us both instincts are wrong.

Much like with the textile mills, the disruption today is real. The fear is understandable. But the investors who will look back on this period with satisfaction won’t be the ones who predicted exactly which software company survived or which Hyperscalers spent wisely. They’ll be the ones who asked the quieter, less glamorous question: what does the physical world of an AI economy actually require? Power. Connectivity. Security. Materials. The unglamorous infrastructure of a technological revolution that, like every one before it, will need to be built before it can be monetized.

This is the work we’re doing in portfolios today. While the market debates whether the light at the end of the tunnel is a locomotive, we’re focused on who is building the tracks. That’s not fear. And it’s not greed. It’s simply understanding human history.

Sincerely,